Political Participation: An Experience

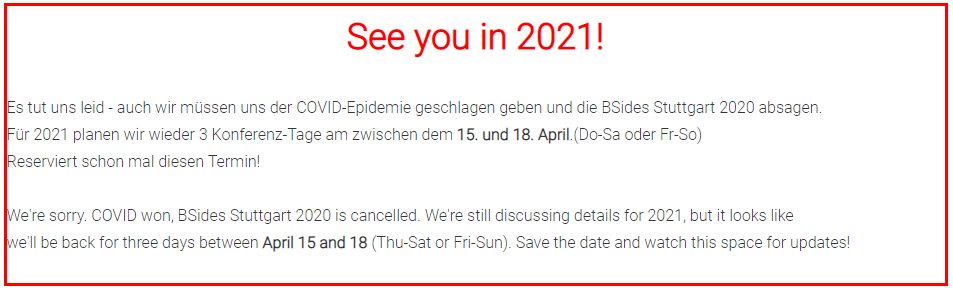

I have always been suspicious of politics, but every now and then you get the itch and tell yourself that you can't just complain and get annoyed all the time, you simply have to do something. That is exactly what I told myself in 2020 and spring 2021, during the Big C. So I decided back then to try getting politically involved after all. That was a while ago, so today I want to reflect on it.

Of course it was also clear to me that in an established party, of whatever stripe, I would have no chance of changing anything. So I picked a new, small, young party whose original values appealed to me quite a bit, namely Volt.

I am a convinced European (Europe, not the EU; more on that later), and I care about environmental protection (not climate) and organic farming, so it seemed like a good fit. The party was very young and motivated, so in short I joined right away as a full member.

You are admitted to the local group, get access to the tools, a membership number, and so on. It was all very exciting and interesting to see how a modern political party organizes itself internally. There were internal forums on all kinds of topics, and events for getting to know each other and debating. I found that extremely exciting, even though the time commitment was quite high alongside my other activities such as a full-time job, real estate and stock investments, a YouTube channel, and so on.

I also thought about where I could contribute best: naturally finance and economic policy, which was what primarily interested me, also in the context of my YouTube channel (Outside-Invest). So I signed up for forum groups on these topics, including pension policy, and started discussing with and listening to like-minded people. I got to know very interesting people from all over Germany who evidently had the same mindset as I did. We wanted to make Volt a party that is green (in favor of environmental protection) and Europe-friendly (reform of the EU), but also economically liberal and sensible. A party that was missing then and is still missing today. A party that is more left-liberal than conservative, but not socialist either. That is exactly what the first manifesto said, the one written by the founders of Volt (Andrea Venzon, Colombe Cahen-Salvador and Damian Boeselager, in 2017), and that appealed to me at the time.

What a lovely illusion.

It quickly became clear, however, that this nice group was rather a minority: a minority with economic and financial competence, more from the middle of society, liberal and not extreme. But unfortunately just a minority in the party. It quickly became clear that the majority of the party, and above all the leading figures at the German (not European) level, were pushing the party in a completely different direction, namely toward what Volt is today: a Europe-friendly but also extremely left, woke and socialist party.

Germany has no need at all for such a party, because there are already enough of them: Die Linke, the SPD, the Greens, and so on. And socialist thinking is simply not something that I or my economics group at the time considered sensible or desirable.

Individual freedom is the absolute highest good, not equality and with it the suppression of the citizen, as green socialism strives for. Capitalism in a social, liberal form is the only mechanism that has ever worked and that allows citizens their freedom, with a state that only sets the boundaries and creates the conditions for the economy and the citizens, but does not think it must and should control everything itself. Unfortunately, that is not the world the parties above, and Volt too, are striving for.

The consequence: others from the group, and eventually I too, gave up and left the party again, in my case not until early 2022. One further reason was that Volt was, incomprehensibly, on the mainstream line when it came to Corona policy as well, and I could no longer support those goals and slogans.

What I also learned is that politics is not for me. Politics means dealing with people whom you cannot convince with reasonable, factual arguments, because they really only use dark rhetoric and want to gain or keep power. It is not about substantive issues, which is what I have always cared about in my life: trying to find and implement the best solution for everyone. That is not what matters in politics; it is about forcing your own position on others and pushing as much of it as possible through in a bad compromise.

That is neither something I am good at nor something that matches my innermost values. These kinds of discussions, where you wonder whether the other person is still in their right mind or what is going on in their brain, have nothing to do with logic, reason and sense. They only produce stress, quarrels and head-shaking, and why would you put yourself through that voluntarily, and pay a membership fee for it on top?

No, I want to think positively with reasonable people, solve real problems and create value, not celebrate politics.

So for me the topic of political participation is over once and for all, and the experiment has failed.

So when politics moves in a direction I cannot support, as it is doing at the moment with the best government we have ever had, that does not mean for me waging a pointless fight against power-hungry people that leads nowhere anyway. It means staying positive and looking for what is best for me and my family: sidestepping instead of fighting.

I respect anyone who decides in this situation to fight back in order to rescue reason after all, but I decided against it, because my personal mental health and freedom are worth more to me than the goal of convincing others of the right path when they want no help with it.

Long live individual freedom and prosperity, the most important democratic fundamental right of the citizen!

Most people would say that they are on their guard and do not believe everything someone tells them. Usually, though, this applies only to the content: we interpret what another person says intuitively. What school or university unfortunately does not teach us is to analyze the other person's arguments and see through their intent so that we can respond appropriately. Education does not teach us critical thinking.


So a couple of weeks ago I bought a book as I stumbled over it on Amazon. This week I started reading “


That is why I now started in September 2020 my own Youtube channel, called “
